Nvidia is not a name that is very familiar to the average consumer, but it is on its way to becoming as important as Apple or Google. In fact, it recently surpassed Google and Amazon in market capitalization to become the fourth most valuable company in the world, behind Microsoft, Apple and Saudi Aramco. What does Nvidia make to have risen so quickly in recent months? The processors needed to run artificial intelligence models. Yesterday it presented a new chip that greatly improves on the performance of the successful H100.

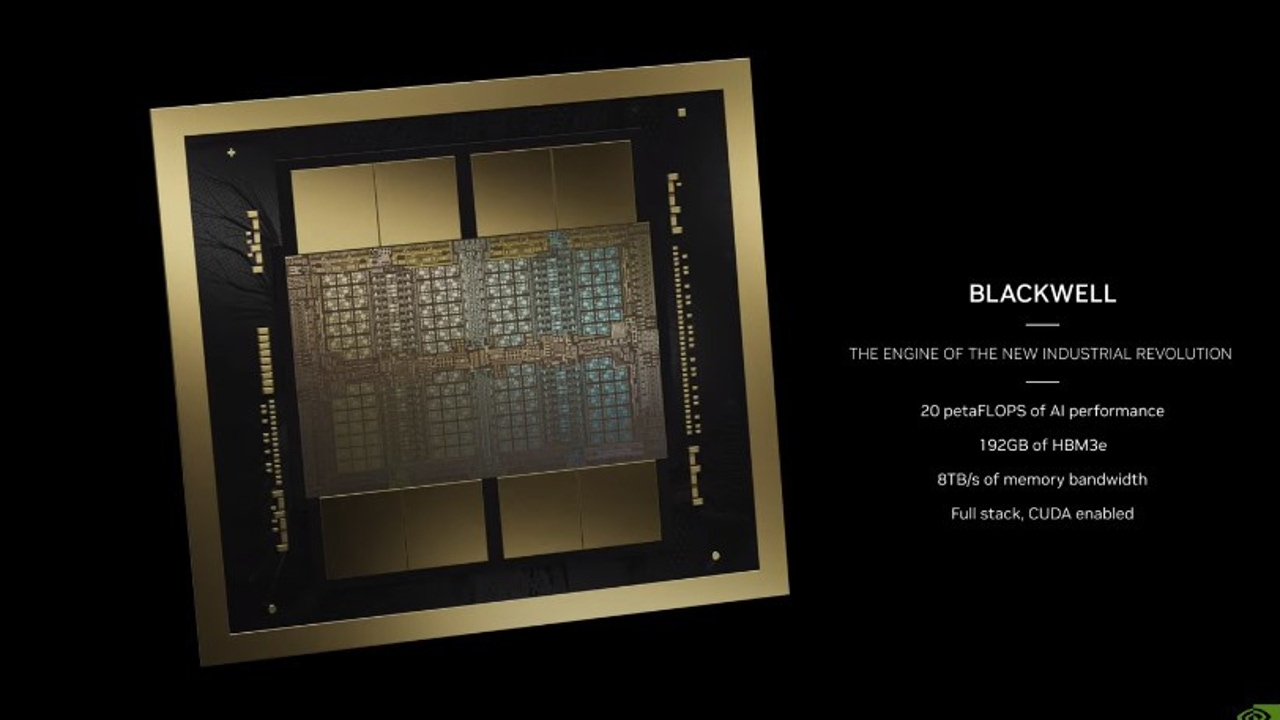

At its annual developers conference, the American company announced the B200 GPU and the GB200 superchip, both based on the new Blackwell architecture. They offer significant improvements in performance and power consumption over the H100 GPUs that companies such as Meta, Microsoft, Google and X have bought by the hundreds of thousands to run their artificial intelligence models.

The size of the generational leap is clear from the number of transistors Nvidia has managed to integrate into each processor. The 80 billion of the H100, launched in 2022, rise to 208 billion in the B200. With them, computing power in FP4 operations reaches 18 petaFLOPS if the GPU is air cooled and 20 petaFLOPS if it is liquid cooled.

The GPU has a memory bandwidth of 8 TB/s, and Nvidia offers configurations with up to 192 GB of VRAM. Just as important for the large companies that buy this type of technology is power consumption, which Nvidia has reduced by up to a factor of 25 compared to the H100. That represents significant savings for the data centers that use these chips.

The GB200 "superchip" announced by Jensen Huang, CEO of Nvidia, integrates two B200 GPUs with a 72-core Grace CPU. This combination delivers peak performance of 40 petaFLOPS in FP4 operations and supports up to 384 GB of HBM3 memory.
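The GB200 figures line up with simply doubling the B200 numbers quoted earlier. The short Python sketch below checks that arithmetic. It assumes the superchip's totals are plain sums over its two GPUs and that the liquid-cooled 20 petaFLOPS figure is the relevant one; neither assumption is confirmed by Nvidia in this article, and the variable names are illustrative only.

```python
# Per-GPU figures quoted in the article for the B200.
# Assumption: the liquid-cooled FP4 figure is the one that applies here.
b200_fp4_petaflops = 20
b200_memory_gb = 192

# The GB200 integrates two B200 GPUs (plus a Grace CPU, ignored here).
gpus_per_gb200 = 2

# Assumption: totals are simple sums over the two GPUs.
gb200_fp4_petaflops = gpus_per_gb200 * b200_fp4_petaflops
gb200_memory_gb = gpus_per_gb200 * b200_memory_gb

print(f"GB200 FP4 performance: {gb200_fp4_petaflops} petaFLOPS")
print(f"GB200 memory: {gb200_memory_gb} GB")
```

Both results match the 40 petaFLOPS and 384 GB quoted for the superchip.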

What do these numbers mean in practice? Training a model with 1.8 trillion parameters would previously have required 8,000 H100 GPUs and 15 megawatts of power. Huang said the same job can be done with 2,000 B200 GPUs while consuming only four megawatts.
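To put Huang's comparison in proportion, the ratios can be worked out directly from the four figures quoted. This is a minimal Python sketch using only those numbers. The per-GPU value is a rough average obtained by dividing total power by GPU count, so it presumably includes whatever else is counted in the quoted megawatts, not just the chips themselves.

```python
# Figures quoted in the article for training a 1.8-trillion-parameter model.
h100_gpus, h100_megawatts = 8_000, 15
b200_gpus, b200_megawatts = 2_000, 4

# How many times fewer GPUs and how much less total power the B200 setup needs.
gpu_reduction = h100_gpus / b200_gpus
power_reduction = h100_megawatts / b200_megawatts

# Rough average power per GPU across each installation, in kilowatts.
h100_kw_per_gpu = h100_megawatts * 1_000 / h100_gpus
b200_kw_per_gpu = b200_megawatts * 1_000 / b200_gpus

print(f"GPUs needed: {gpu_reduction:.2f}x fewer")
print(f"Total power: {power_reduction:.2f}x lower")
print(f"Average per GPU: {h100_kw_per_gpu:.3f} kW (H100) vs {b200_kw_per_gpu:.3f} kW (B200)")
```

On these figures the saving comes from needing a quarter as many GPUs: the average draw per GPU is about the same, while the total power bill falls by a factor of 3.75.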

The new Blackwell architecture used by the B200 and GB200 will not be limited to artificial intelligence chips. It will also carry over to the next generation of consumer graphics cards.

Nvidia has been a leader in that field, whose main buyers are video game fans, for three decades. But that success pales next to the growth the company is experiencing from producing the hardware needed for artificial intelligence. In January 2023, its shares traded at $176. Just over a year later they stand at $857, with no sign that they have hit their ceiling.